Securing agentic LLMs as they meet the web


When Large Language Models (LLMs) start to act, not just chat, the security stakes shift. Agents that plan, call tools and browse the web can turn a prompt failure into a real action. A new survey takes a clear look at that change and argues we are moving from securing single agentic systems to securing an interconnected Agentic Web.

The paper sets out a threat taxonomy that tracks where and how things go wrong. Prompt abuse covers crafted inputs or delegation messages that steer agents off course. Environment injection hides instructions in web pages, files, user interfaces or multimodal content, waiting for an agent to read them. Memory attacks poison or exfiltrate from persistent stores, creating long-lived influence. Toolchain abuse treats third-party tools and API calls as a supply chain, where one weak link or an unsafe composition can cause harm. Model tampering and backdoors introduce conditional failures that standard tests may miss. Agent network attacks push payloads between agents, producing cascades that exceed the impact of a single compromise.

These problems grow once agents operate across domains. Injection payloads can propagate via summaries or delegated subtasks. Shared or organisational memory makes poisoning stick. Safe-looking tool outputs can combine into risky sequences. A heterogeneous, multi-model ecosystem makes backdoors and conditional failures harder to spot. In other words, the blast radius grows with delegation chains and cross-domain interactions.
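One way to reason about that blast radius is to model which agents consume which other agents' outputs and ask what a single injected payload could reach. The sketch below is illustrative only: the agent names and the `FLOWS` graph are hypothetical and do not come from the survey. It also assumes the worst case, in which a payload survives every hop.

```python
from collections import deque

# Hypothetical content flows: the output of each key is consumed by the listed agents.
# "browser" -> "researcher" models a summary passed upward;
# "planner" -> "mailer" models a delegated subtask.
FLOWS = {
    "browser": ["researcher"],
    "researcher": ["planner"],
    "planner": ["mailer", "researcher"],
    "mailer": [],
    "billing": ["mailer"],
}

def blast_radius(flows, compromised):
    """Return every agent a payload could reach, starting from `compromised`."""
    seen = {compromised}
    queue = deque([compromised])
    while queue:
        agent = queue.popleft()
        for consumer in flows.get(agent, []):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen

# An injection read by the browser agent can reach the mailer via two hops.
print(sorted(blast_radius(FLOWS, "browser")))
# → ['browser', 'mailer', 'planner', 'researcher']
```

Each edge removed or mediated between agents shrinks the reachable set, which is the intuition behind limiting delegation chains.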

What works today, and what does not

The survey reviews defences mapped to the agent pipeline. Prompt hardening and safety-aware decoding can reduce trivial subversion but will not stop determined adversaries. Privilege control for tools and APIs, including least privilege and mediation gateways, limits what an agent can touch. Runtime monitoring can catch suspect actions in flight. Continuous red-teaming helps keep pace with changing attack tactics. None of these alone is enough; layered controls are a practical necessity.
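To make the privilege-control idea concrete, here is a minimal sketch of a mediation gateway that enforces a per-agent allowlist and records every decision. The `ToolGateway` class, the agent identifiers and the tool names are assumptions made for illustration. They do not describe a design from the survey or any particular framework.

```python
from dataclasses import dataclass, field

class ToolDenied(Exception):
    """Raised when an agent requests a tool it has not been granted."""

@dataclass
class ToolGateway:
    tools: dict                      # tool name -> callable
    grants: dict                     # agent id -> set of permitted tool names
    audit_log: list = field(default_factory=list)

    def call(self, agent_id, tool_name, **kwargs):
        # Deny by default: the tool must exist and be explicitly granted.
        allowed = (
            tool_name in self.tools
            and tool_name in self.grants.get(agent_id, set())
        )
        self.audit_log.append((agent_id, tool_name, "allow" if allowed else "deny"))
        if not allowed:
            raise ToolDenied(f"{agent_id} may not call {tool_name}")
        return self.tools[tool_name](**kwargs)

gateway = ToolGateway(
    tools={
        "search": lambda query: f"results for {query}",
        "send_email": lambda to, body: f"sent to {to}",
    },
    grants={"researcher": {"search"}},   # the researcher agent cannot send email
)

print(gateway.call("researcher", "search", query="agent security"))
# → results for agent security
```

Routing every tool call through a single choke point also gives runtime monitoring a natural place to observe actions in flight.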

At web scale the authors argue for protocol-level mechanisms. Interoperable identity and authorisation for agents and tools would let systems apply explicit delegation constraints that machines can verify. Provenance and traceability for artefacts, messages and plans would support auditing and containment when things go wrong. Ecosystem-level response capabilities, including quarantine and revocation, become as important as single-system mitigations because incidents will propagate along delegation paths.
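As a rough illustration of a machine-verifiable delegation constraint, the sketch below issues a token that names the delegate, the permitted scopes and an expiry, then checks it before an action is allowed. It is a simplification under stated assumptions: the claim names, the scope strings and the shared `SECRET` are hypothetical, and a cross-organisation deployment would more plausibly use asymmetric signatures than a shared HMAC key.

```python
import hashlib
import hmac
import json

SECRET = b"demo-key-not-for-production"  # assumption: one shared key, for brevity

def _tag(claims, key):
    payload = json.dumps(claims, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def issue(issuer, delegate, scopes, expires_at, key=SECRET):
    """Create a delegation token binding a delegate to explicit scopes."""
    claims = {"iss": issuer, "sub": delegate,
              "scopes": sorted(scopes), "exp": expires_at}
    return {"claims": claims, "tag": _tag(claims, key)}

def authorise(token, action, now, key=SECRET):
    """Allow `action` only if the token is intact, unexpired and in scope."""
    if not hmac.compare_digest(_tag(token["claims"], key), token["tag"]):
        return False  # claims were altered after issuance
    claims = token["claims"]
    return now < claims["exp"] and action in claims["scopes"]

token = issue("planner", "researcher", {"web.read"}, expires_at=1000)
print(authorise(token, "web.read", now=500))   # → True
print(authorise(token, "mail.send", now=500))  # → False
```

Because the scopes are part of the signed claims, a delegate cannot widen its own authority without invalidating the token.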

Implications for practice and policy

This is a survey, not new empirical work. Many of the defences it cites are heuristics tested on individual agents or small setups. Formal guarantees are thin, and evaluation against adaptive adversaries is still at an early stage. That caution matters for practitioners building agent workflows and for policymakers contemplating rules or standards.

Still, the direction of travel is clear. If you deploy agents, treat tools and memories as a supply chain. Enforce least privilege at the tool and API layer. Instrument runtime monitoring and keep red-teaming continuous. Where you can, require machine-verifiable identities for agents and tools, explicit delegation boundaries, and provenance metadata on outputs. Those are not silver bullets, but they create the preconditions for limiting blast radius and doing credible post-incident forensics.
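Provenance metadata can be as simple as a linked chain of records, each committing to the content an agent produced and to the record before it. The following is a minimal sketch, not a standard: the field names and the `add_record` and `verify` helpers are hypothetical. A plain hash chain is only tamper-evident, so a real system would also need to sign records to bind them to an identity.

```python
import hashlib
import json

FIELDS = ("producer", "content_sha256", "prev")

def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def add_record(chain, producer, content):
    """Append a record that commits to the content and the previous record."""
    body = {
        "producer": producer,
        "content_sha256": hashlib.sha256(content.encode()).hexdigest(),
        "prev": chain[-1]["digest"] if chain else "",
    }
    chain.append({**body, "digest": _digest(body)})
    return chain

def verify(chain):
    """Return True only if every record is intact and correctly linked."""
    prev = ""
    for record in chain:
        body = {name: record[name] for name in FIELDS}
        if record["prev"] != prev or record["digest"] != _digest(body):
            return False
        prev = record["digest"]
    return True

chain = []
add_record(chain, "browser", "raw page text")
add_record(chain, "researcher", "summary of the page")
print(verify(chain))  # → True
```

During an investigation, a chain like this lets an auditor walk back from a harmful action to the artefact and agent that introduced it.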

For governance, the priority is stitching these pieces into shared norms: interoperable identity and authorisation, provenance that survives across vendors, and response processes that work across organisational lines. It is hard work, but feasible. The web went through a similar process with its security protocols and incident coordination. Agent ecosystems will need their own equivalents, and the sooner we converge on them, the safer this emerging Agentic Web will be.

Additional analysis of the original arXiv paper

📋 Original Paper Title

From Secure Agentic AI to Secure Agentic Web: Challenges, Threats, and Future Directions

🔍 ShortSpan Analysis of the Paper

Problem

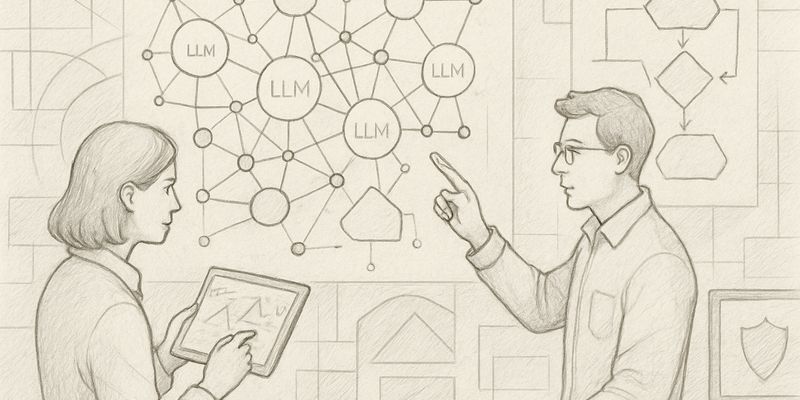

This paper surveys emerging security risks as large language models are deployed as agentic systems that plan, remember, and act through tools, persistent memory and web interactions. The central concern is that failures are no longer confined to unsafe text generation but can produce real-world harm when agents execute actions, call APIs, store poisoned memories, or process untrusted web content. The paper frames a transition from securing individual agentic AI systems to securing an interconnected Agentic Web of cooperating agents and services.

Approach

The authors present a component-aligned threat taxonomy and review defence strategies mapped to agent pipelines. Threat classes are grouped by injection point: prompt abuse, environment injection, memory attacks, toolchain abuse, model tampering and agent network attacks. Defence families include prompt hardening, model robustness and safe decoding, privilege control and tool mediation, runtime monitoring, continuous red-teaming and protocol-level security for identity, authorisation and provenance. For each category the survey explains how threats escalate when agents operate across domains, use delegation chains and rely on protocol-mediated ecosystems.

Key Findings

- Prompt abuse is a broad family of attacks where crafted inputs or delegation messages override or subvert agent objectives; when agents can act via tools, such subversion can cause real actions beyond text-level deviations.

- Environment injection arises from hidden instructions in web pages, files, UIs or multimodal content; in a web setting such injections scale across workflows and can propagate via summaries or delegated subtasks.

- Memory attacks that poison or exfiltrate from persistent stores can exert long-lived influence on planning and create disclosure risks; shared or organisational memory magnifies this persistence.

- Toolchain abuse and third-party tool compromise create supply-chain-like risks: safe-seeming tools or compositions can enable dangerous chains of actions even if individual outputs appear benign.

- Model tampering and backdoors permit conditional failure modes that evade standard tests and become harder to detect in diverse, multi-model ecosystems.

- Agent network attacks allow injection payloads to travel between agents, producing cascades and systemic incidents that exceed single-agent impact.

- Layered defences are necessary: prompt hardening, safe decoding, least-privilege tool controls, runtime monitoring and continuous red-teaming complement one another, but none suffices alone.

- At web scale the key missing primitives are interoperable identity and authorisation, provenance and traceability, and ecosystem-level response capabilities such as quarantine and revocation.
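The quarantine and revocation primitives in the last finding can be sketched as a check on the delegation path a message has travelled. This is an illustration under assumptions: the `RevocationRegistry` class, the message shape and the agent names are hypothetical, and a real ecosystem would need a shared, authenticated way to distribute revocations.

```python
class RevocationRegistry:
    """Tracks agents whose credentials have been revoked."""

    def __init__(self):
        self._revoked = set()

    def revoke(self, agent_id):
        self._revoked.add(agent_id)

    def path_is_trusted(self, delegation_path):
        # A single revoked hop taints everything that passed through it.
        return not any(agent in self._revoked for agent in delegation_path)

def route(registry, message):
    """Deliver a message, or quarantine it if its path includes a revoked agent."""
    if registry.path_is_trusted(message["path"]):
        return "deliver"
    return "quarantine"

registry = RevocationRegistry()
message = {"path": ["browser", "researcher", "planner"], "body": "summary"}
print(route(registry, message))   # → deliver

registry.revoke("researcher")     # incident response: revoke the compromised agent
print(route(registry, message))   # → quarantine
```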

Limitations

The survey synthesises existing studies rather than presenting new empirical results; effectiveness claims depend on prior work and benchmarks. Many defences were validated on individual agents or limited scopes, so their scalability to large, heterogeneous agent ecosystems remains uncertain. Formal guarantees are limited: several proposed defences are heuristics or architectural proposals rather than provable controls, and evaluation under adaptive adversaries is still nascent.

Why It Matters

As agents move from isolated tools to an interconnected Agentic Web, security shifts from hardening single models to governing an ecosystem. Practical implications include the need for machine-verifiable identities and explicit delegation constraints, provenance metadata and audit trails for delegated artefacts, protocol gateways that mediate tool calls and enforce least privilege, and monitoring and mitigation workflows that operate across delegation chains. These measures are essential to prevent propagation, limit blast radius from compromised components, and support post-incident forensics. The survey highlights priorities for research, evaluation and standards that will help practitioners and policymakers design safer, more trustworthy agent ecosystems.