LLM agents break trust boundaries; favour deterministic controls

Frontier AI agents are not just chatbots with a clipboard. Perplexity’s submission to a US standards request argues that agent architectures change the old separation between code and data, blur authority boundaries, and make execution less predictable. If you run agents in production, that translates into new ways to lose confidentiality, integrity and availability.

The paper is grounded in operating large, general-purpose agents. It charts attack surfaces across tools, connectors, hosting boundaries and multi-agent setups. The headline risks are familiar but nastier when the system accepts goals and builds workflows on the fly.

- Indirect prompt injection from retrieved content steering tool use.

- Confused-deputy behaviour across multi-agent chains and shared workspaces.

- Cascading failures in long-running, stateful workflows.

Where this bites in real infrastructure

Endpoints and browsers: Agents that read web pages or email will ingest untrusted content that can act like executable control. Treat anything the agent retrieves as hostile. Isolate the browsing and file-handling environment, strip implicit trust, and avoid giving default filesystem or credential access. Long-lived sessions and shared caches increase blast radius.

Data pipelines and retrieval: If your agent builds plans from retrieved knowledge, poisoned documents can redirect actions. The paper’s point on code-data blur is a warning: prompts and outputs become control flow. Add content sanitisation and schema checks before tool execution, version your corpora, and audit which document led to which action so that rollback is possible when a bad page sneaks in.
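The audit trail described above can be sketched in a few lines. This is a minimal illustration, not something taken from the paper: `RetrievedDoc`, `ProvenanceLog` and the field names are hypothetical, and a real system would persist the log rather than hold it in memory.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievedDoc:
    source: str          # where the text came from (URL, file path, ...)
    corpus_version: str  # label of the corpus snapshot it was read from
    text: str

    @property
    def digest(self) -> str:
        # Content hash, so a poisoned page can be identified exactly later.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()


class ProvenanceLog:
    """Hypothetical in-memory record of which documents fed which action."""

    def __init__(self):
        self._entries = []

    def record(self, action_id, tool, docs):
        self._entries.append({
            "action_id": action_id,
            "tool": tool,
            "docs": [(d.source, d.corpus_version, d.digest) for d in docs],
        })

    def actions_influenced_by(self, digest):
        # Every action whose context included the document with this hash.
        return [
            e["action_id"]
            for e in self._entries
            if any(doc_digest == digest for _, _, doc_digest in e["docs"])
        ]
```

When a document is later found to be poisoned, `actions_influenced_by(doc.digest)` returns the actions to review or roll back.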

Tooling and model serving: Orchestrators that let models pick tools need a deterministic backstop. The authors highlight allowlists, strict schema validation, rate limits, and explicit human approval for high-consequence actions as the most mature line of defence in production. Keep business-logic policies out of the prompt and in code that enforces them. Log each tool call with inputs, outputs and decision rationale to support incident response.
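As a rough sketch of what such a backstop might look like, the gate below combines an allowlist, a strict argument schema, a sliding-window rate limit and a human-approval requirement in ordinary code that runs outside the model. The tool names, the `PolicyGate` class and the limits are all assumptions made for illustration; the paper does not prescribe an implementation.

```python
import time
from dataclasses import dataclass, field

# Hypothetical tool registry: tool name -> required argument names and types.
# Anything not listed here is rejected (the allowlist).
TOOL_SCHEMAS = {
    "search_docs": {"query": str},
    "send_payment": {"account": str, "amount_cents": int},
}
# Hypothetical set of tools that need a named human approver per call.
HIGH_CONSEQUENCE = {"send_payment"}


@dataclass
class PolicyGate:
    max_calls: int = 5            # allowed calls per window (assumed value)
    window_seconds: float = 60.0  # window length (assumed value)
    _calls: list = field(default_factory=list)

    def check(self, tool, args, approved_by=None, now=None):
        """Return (allowed, reason) for a tool call proposed by the model."""
        now = time.monotonic() if now is None else now
        schema = TOOL_SCHEMAS.get(tool)
        if schema is None:
            return False, "tool not on allowlist"
        if set(args) != set(schema):
            return False, "unexpected or missing arguments"
        for name, expected in schema.items():
            # type() rather than isinstance() so a bool is not accepted as int
            if type(args[name]) is not expected:
                return False, f"argument {name!r} has wrong type"
        # Sliding-window rate limit over previously allowed calls.
        self._calls = [t for t in self._calls if now - t < self.window_seconds]
        if len(self._calls) >= self.max_calls:
            return False, "rate limit exceeded"
        if tool in HIGH_CONSEQUENCE and not approved_by:
            return False, "human approval required"
        self._calls.append(now)
        return True, "ok"
```

The orchestrator would call `gate.check(tool, args)` before every execution and log the returned reason alongside the call. Only allowed calls count towards the rate limit in this sketch; counting rejected attempts as well is a reasonable alternative.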

Code execution and sandboxes: Weak sandboxes remain a primary vector. The defence stack the paper describes only works if your execution environment is actually isolated. No shared writeable volumes across tenants, no default network egress, and time-bounded runs. If you cannot explain what the agent is allowed to execute and where it can send bytes, you do not have a sandbox.
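Two of these properties, time-bounded runs and no inherited credentials, can be illustrated with the standard library alone. The sketch below is deliberately not a sandbox: it does nothing about network egress or filesystem access outside the scratch directory, which need container or OS-level isolation. The function name `run_untrusted` and the environment allowlist are assumptions made for the example.

```python
import os
import subprocess
import sys
import tempfile

# Environment variables the child may inherit (an assumed minimal set).
# Everything else, including API keys in the parent environment, is dropped.
ENV_ALLOWLIST = ("PATH", "LD_LIBRARY_PATH", "SYSTEMROOT")


def run_untrusted(code: str, timeout_seconds: float = 5.0) -> dict:
    """Run a Python snippet in a child process with a hard time limit.

    NOT real isolation: this only bounds wall-clock time, strips the
    environment, and starts the child in a throwaway working directory.
    """
    env = {k: os.environ[k] for k in ENV_ALLOWLIST if k in os.environ}
    with tempfile.TemporaryDirectory() as scratch:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: isolated mode
                cwd=scratch,
                env=env,
                capture_output=True,
                text=True,
                timeout=timeout_seconds,  # child is killed when this expires
            )
        except subprocess.TimeoutExpired:
            return {"status": "timeout", "stdout": "", "returncode": None}
    return {"status": "exited", "stdout": proc.stdout, "returncode": proc.returncode}
```

A snippet that loops forever comes back with `status` set to `"timeout"` rather than hanging the orchestrator, and a snippet that reads its environment does not see the parent's secrets.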

GPU clusters and serving infra: The orchestration layer is another trust boundary. On multi-tenant clusters, assume agents will try unexpected sequences and cause resource spikes. Apply deterministic resource limits and kill-switches at the scheduler, not just in prompts. Hosting choices matter: as the paper notes, cloud hosting adds network trust boundaries, while on-prem deployment trims internet exposure but raises insider and configuration risk.

Secrets and connectors: Agents chain tools, and each connector widens the blast radius. Scope tokens to the minimum action set, rotate aggressively, and keep secrets out of prompt-visible contexts. Plugin and skill supply chains are an attack surface; verify and monitor them like any third-party integration.
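One way to picture minimum-scope, short-lived tokens is a check that runs in the connector layer before any call goes out. The `ScopedToken` type, the scope strings and the `authorize` helper below are hypothetical; real connectors would rely on the scoping and expiry features of the service they talk to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedToken:
    token_id: str          # opaque identifier; the secret itself lives elsewhere
    scopes: frozenset      # the only actions this token may perform
    expires_at: float      # timestamp after which the token is dead


def authorize(token: ScopedToken, required_scope: str, now: float) -> bool:
    """Allow a connector call only if the token is live and holds the scope."""
    if now >= token.expires_at:
        return False  # expired: forces rotation instead of long-lived access
    return required_scope in token.scopes
```

A token issued with only `"calendar:read"` fails the check for `"calendar:write"`, so an agent steered by injected content cannot use that connector to change state.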

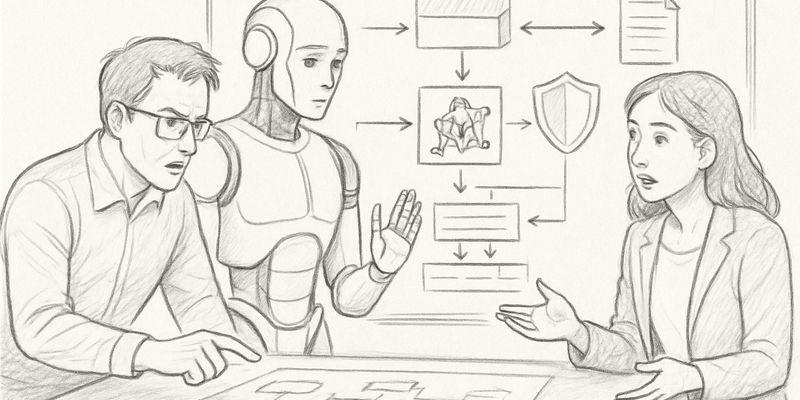

Controls that survive contact with production

The paper groups defences into input-level checks, model-level instruction hierarchies, sandboxed execution and deterministic enforcement. The authors are clear on maturity: the last category is what saves you at 3am. Detectors for prompt injection suffer base-rate false positives and latency costs, and model “hierarchies” are conventions, not guarantees. Use them as hygiene, not as gates.

The incident notes in the paper reinforce this: some failures are plain old application security, including documented vulnerabilities in the OpenClaw platform and a one-click remote code execution case that did not depend on large language model (LLM) behaviour. Treat the agent stack like a web app plus scheduler, then add the agentic quirks on top.

What is missing is standardisation and measurement. The authors call for realistic, adaptive security benchmarks, better delegation and privilege models for agents, and guidance for secure multi-agent design aligned with established risk frameworks. Until that lands, keep one deterministic layer between the model and anything that can spend money, move data or change state. Your pager will thank you.

Additional analysis of the original arXiv paper

📋 Original Paper Title

Security Considerations for Artificial Intelligence Agents

🔍 ShortSpan Analysis of the Paper

Problem

This paper examines security risks introduced by frontier AI agents, arguing that agent architectures fundamentally change core assumptions about code-data separation, authority boundaries, and execution predictability. Those shifts create new confidentiality, integrity and availability failure modes. The authors emphasise that agentic systems accept high-level goals, dynamically construct workflows, and chain tool calls, which increases opportunities for data exfiltration, unauthorised actions, cascading failures in long-running workflows and harder-to-reason-about states compared with traditional software.

Approach

The analysis draws on Perplexity’s operational experience with large-scale, general-purpose agent systems and surveys attack surfaces, threat actors and failure modes across tools, connectors, hosting boundaries and multi-agent coordination. It highlights concrete threat classes such as indirect prompt injection, confused-deputy behaviour and workflow cascades, evaluates existing mitigations as a layered defence stack (input-level and model-level measures, sandboxed execution and deterministic policy enforcement), and identifies standards and research gaps including benchmarks, delegation and privilege policy models, and secure multi-agent design aligned with NIST risk management principles.

Key Findings

- Agent architectures blur the line between code and data so plaintext prompts and model outputs can act as executable control, increasing injection and logic‑confusion risks.

- High‑risk threat classes include indirect prompt injection where adversarial content retrieved during normal operation manipulates agents, classic confused‑deputy vulnerabilities in multi‑agent chains, and cascading failures in long multi‑step workflows.

- Attack surfaces span tool selection and execution logic, web‑grounded ingestion, skill and plugin supply chains, orchestrators and shared workspaces, and hosting/exposure choices such as gateways, web UIs and webhooks.

- Deployment and hosting choices materially change exposure: cloud hosting adds network trust boundaries, on‑prem or local deployments reduce internet exposure but raise insider and configuration risks; weak sandboxing for code execution is a key vector.

- Defences are best organised as defence‑in‑depth: input‑level detection and sanitisation, model‑level instruction hierarchies and embedding tricks, system‑level sandboxing and execution monitoring, and a deterministic last line of defence (allowlists, rate limits, schema validation). Deterministic controls are currently the most mature in production.

- Operational constraints impede some defences: prompt‑injection detectors face base‑rate false positive problems and performance overheads; model‑level hierarchies remain conventions rather than verifiable guarantees; fully specifying legitimate control flows in open‑ended systems is an open challenge.

- Concrete incidents illustrate hazards, including documented vulnerabilities in the OpenClaw platform and a one‑click remote code execution case that did not depend on LLM behaviour.

- There is a gap in realistic, adaptive security benchmarks and in policy models for delegation, privilege control and inter‑agent trust.

Limitations

Many defences are probabilistic and cannot assure security alone. Input detectors suffer from false positives and cost/latency trade‑offs. Model‑level instruction hierarchies are learned conventions rather than enforced guarantees. System‑level sandboxing depends on correctly identifying legitimate control flows, which is difficult in open‑ended agent use. Research maturity varies: deterministic enforcement and human‑in‑the‑loop controls are mature, other approaches are early or experimental.
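The base-rate problem behind detector false positives is easy to see with a little arithmetic. The function below applies Bayes' rule to estimate what fraction of alerts are real attacks; the rates used in the example are invented for illustration and do not come from the paper.

```python
def alert_precision(base_rate, true_positive_rate, false_positive_rate):
    """Fraction of detector alerts that correspond to real injections.

    base_rate: share of inputs that are actually malicious
    true_positive_rate: share of malicious inputs the detector flags
    false_positive_rate: share of benign inputs the detector flags
    """
    true_alerts = base_rate * true_positive_rate
    false_alerts = (1.0 - base_rate) * false_positive_rate
    total = true_alerts + false_alerts
    return true_alerts / total if total > 0 else 0.0


# Assumed numbers: 1 in 1,000 inputs is malicious, the detector catches
# 99% of them and wrongly flags 1% of benign inputs.
precision = alert_precision(0.001, 0.99, 0.01)
print(f"{precision:.3f}")  # prints 0.090
```

Under those assumed rates only about 9% of alerts are genuine, which is why a detector that looks accurate on paper can still be unusable as a hard gate.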

Why It Matters

Practitioners must treat agent design and hosting decisions as first‑order security factors and adopt layered defences with at least one deterministic enforcement layer. Standards for inter‑agent communication, adaptive adversarial benchmarks, access control models that combine role‑based and risk‑adaptive approaches, and research into usable human‑agent governance are urgent priorities. These measures will reduce exfiltration, unauthorised actions, privilege escalation across agents and availability attacks, and support auditing and recovery in increasingly agentic systems.