LLM Agents Shift Risk to Runtime Supply Chains

Agents

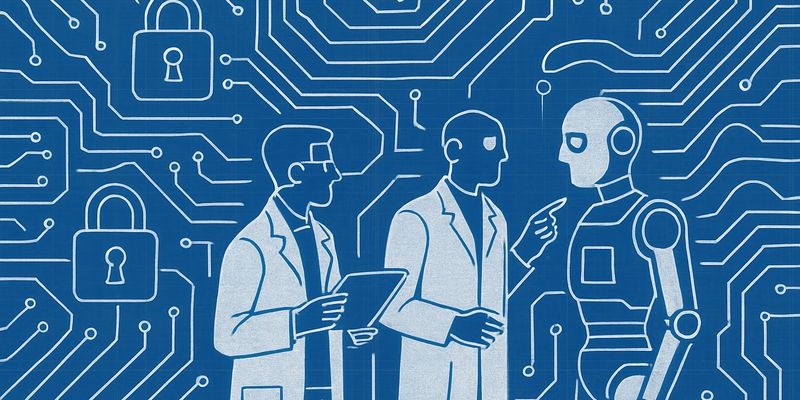

AI agents promise hands‑off automation. The security reality is less glossy. When you let a Large Language Model (LLM) choose what to read, what to remember and which tools to run, the attack surface moves from build artefacts to whatever the agent touches at runtime. That is where attackers will stand, and it is where many teams are not looking.

A new systematisation of these risks argues that agentic AI is a runtime supply chain problem. Rather than yet another model jailbreak catalogue, it focuses on the environment an agent assembles on the fly: retrieved documents, shared memories, tool registries and APIs. The authors frame the adversary as “Man‑in‑the‑Environment” supplying tainted inputs and capabilities. It is not experimental work, but it does give practitioners a cleaner map of where things go wrong.

Data and tool supply chains

On the data side, two classes of attack dominate. Transient context injection happens within a session: a retrieved page or message carries instructions that override the agent’s policies or steer it off task. Persistent poisoning targets what the agent stores or relies on across sessions. Populate a knowledge base or long‑term memory with persuasive falsehoods and the agent will misbehave tomorrow without fresh prompting. Prior results show you do not need to poison much if you optimise for what retrieval will fetch.

The tool side is a three‑act play. Discovery attacks trick the agent into finding the wrong capability, for example via hallucination squatting or semantic masquerading where a malicious tool looks like the right one by description. Implementation attacks hide backdoors in the tool or its transitive dependencies. Invocation attacks exploit over‑privileged calls or argument injection to make a legitimate tool do illegitimate work. None of this touches model weights, yet the effects look like classic privilege escalation.

Then there is the Viral Agent Loop. If agent outputs are later discoverable inputs, triggers can propagate across systems like semantic worms. Post a crafted snippet to a shared document, another agent ingests it, repeats or amplifies the behaviour, and the cycle continues. No buffer overflow required, just credulous automation.

Defences: some promise, many gaps

The paper advocates a Zero‑Trust Runtime Architecture. Treat context as untrusted control flow. Bind tools and data to cryptographic provenance and signed registries rather than trusting a model’s semantic judgement. Use instruction hierarchies and intent verification so that high‑risk actions need explicit confirmation. Verify‑before‑commit for memory writes. Track taint through the pipeline. Split an Auditor that reasons about plans from a Worker that executes them with least privilege.

All sensible. Also incomplete. Statistical filters and ad hoc policies will not catch graph poisoning or transitive dependency abuse, but the proposed alternatives face hard problems too. Taint analysis over non‑deterministic reasoning is brittle. Standardising and governing resilient registries is a socio‑technical slog. Evaluating these defences in open, persistent environments is still mostly an aspiration.

Does this matter? Yes, if you are wiring agents to corporate documents, SaaS APIs or other people’s data. Many teams overinvest in fine‑tuning and underinvest in runtime controls. This work will not hand you a turnkey blueprint, but it does put the risk in the right place and names the failure modes you should threat model: context injection, memory poisoning, tool discovery and invocation abuse, and recursive propagation. In practice, that means default‑deny tool access, tight scopes and allowlists, provenance where you can get it, and a habit of testing agents as if an attacker controls the environment around them. The open question is whether we can make these controls usable at the pace product teams want. Right now, the environment, not the model, is your soft underbelly.

Additional analysis of the original ArXiv paper

📋 Original Paper Title and Abstract

Agentic AI as a Cybersecurity Attack Surface: Threats, Exploits, and Defenses in Runtime Supply Chains

🔍 ShortSpan Analysis of the Paper

Problem

The paper examines how autonomous agentic systems built on large language models shift the security boundary from build time to inference time. Agents dynamically retrieve data, update memory and invoke external tools, so untrusted runtime inputs and probabilistic capability resolution become primary attack surfaces. This enables adversaries to manipulate behaviour, leak data or trigger harmful side effects without compromising model weights or infrastructure, and creates new systemic risks when agent outputs re-enter the environment.

Approach

The authors systematise runtime risks by framing agent execution as a supply chain and by defining a Man-in-the-Environment adversary who supplies malicious runtime artifacts. They organise threats into two linked chains: the Data Supply Chain (transient context injection and persistent memory poisoning) and the Tool Supply Chain (three phases: Discovery, Implementation and Invocation). They introduce the Viral Agent Loop concept to capture recursive propagation across agents and propose a Zero-Trust Runtime Architecture with concrete defensive primitives. The paper synthesises prior empirical results and illustrative examples rather than presenting new experimental data.

Key Findings

- Inference-time dependencies dominate risk: agents assemble context and capabilities at runtime, so manipulated documents, registries or APIs can alter decision-making without touching code or model weights.

- Data supply-chain attacks bifurcate by persistence: within-session attacks (indirect prompt injection and many-shot in-context poisoning) can immediately override safety constraints, while retrieval and long-term memory poisoning produce persistent misbehaviour across sessions.

- Small corpus poisoning can be highly effective: retrieval-optimised triggers show that poisoning a tiny fraction of an external corpus can yield very high attack success rates for targeted queries.

- Tool supply-chain compromise occurs in three phases: Discovery (hallucination squatting and semantic masquerading), Implementation (hidden backdoors and transitive dependency exploitation) and Invocation (over-privileged calls and argument injection), each capable of producing harmful side effects even when other invariants hold.

- The Viral Agent Loop enables semantic, self-propagating attacks: agent outputs that become discoverable inputs can cause chains of compromise across agents without exploiting low-level software flaws, analogous to worms at the instruction level.

Limitations

The work is a systematisation and synthesis of prior studies rather than a controlled empirical evaluation. It assumes adversaries do not modify model weights or hosting infrastructure and focuses on supply-chain style manipulations. Several defensive proposals remain conceptual and face practical challenges: tracking taint through non-deterministic model reasoning, standardising resilient registries, and evaluating defences in open-world, persistent settings.

Why It Matters

Agentic systems transform context into executable control, so defenders must move from static supply-chain thinking to a Zero-Trust Runtime model. Practical implications include adopting instruction hierarchies and intent verification, cryptographically bound registries and signed provenance for tools and data, verify-before-commit protocols, runtime taint analysis and an Auditor-Worker architecture that isolates semantic oversight from execution. These measures aim to prevent semantic manipulation, privilege escalation and self-propagating agentic worms, and to support more robust threat modelling and defence design for AI-driven automation.